
- Products

- Solutions

- Support

- Joint Innovation Platform

- About Us


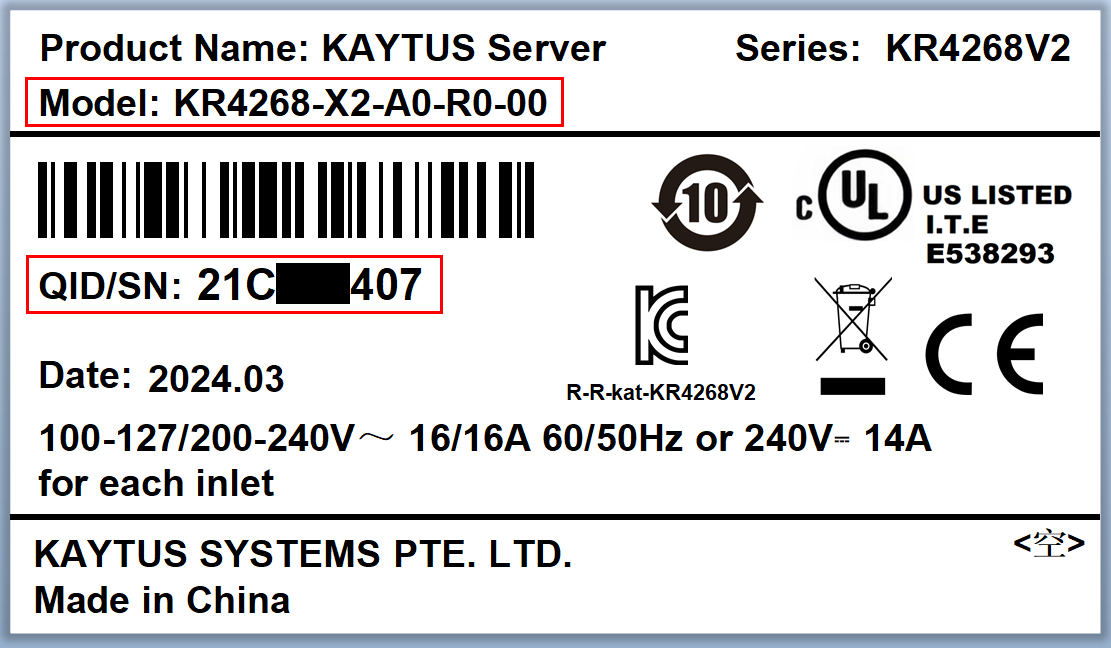

- meta brain® General Purpose Servers

- meta brain® Artificial Intelligence Servers

- meta brain® Edge Computing Servers

- Storage

- Edge computing and the Internet of Things

- Data storage and Management


- Rack and Tower Servers

- Rack-scale Servers

- Multi-node Servers

-

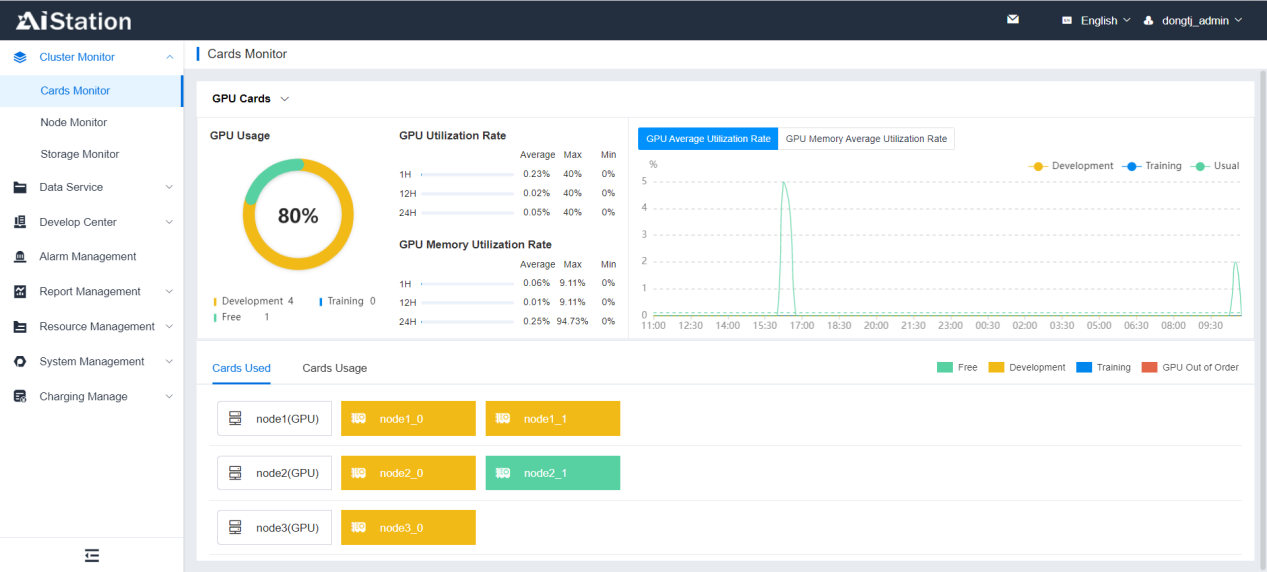

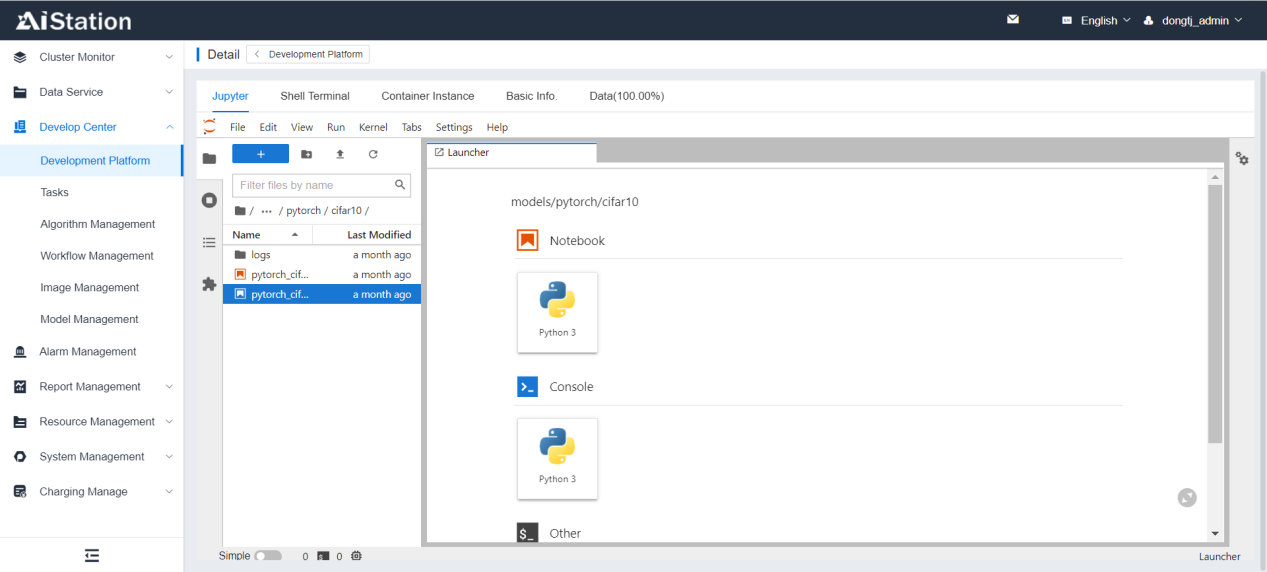

AI ServersAI Software

- Edge Servers

- Edge Microservers

- Portable AI Servers

- Edge Micro-Centers

Storage >>

- All Flash Storage

- Hybrid Flash Storage

- Fiber Switch

- SSDs

Relying on our customer test center, we provide customers and partners with convenient testing resources…

How To Buy >>

Contact The Dealer To Purchase >>

If you would like to inquire about products or solutions by phone, please call: 400-691-8711

Partners >>

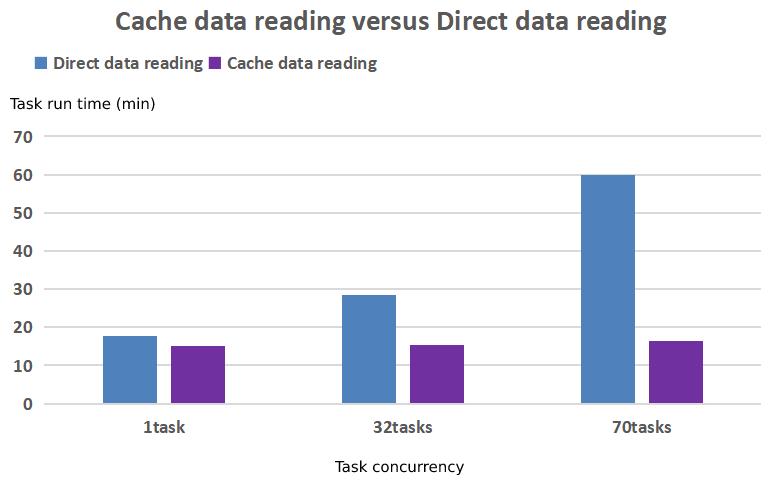

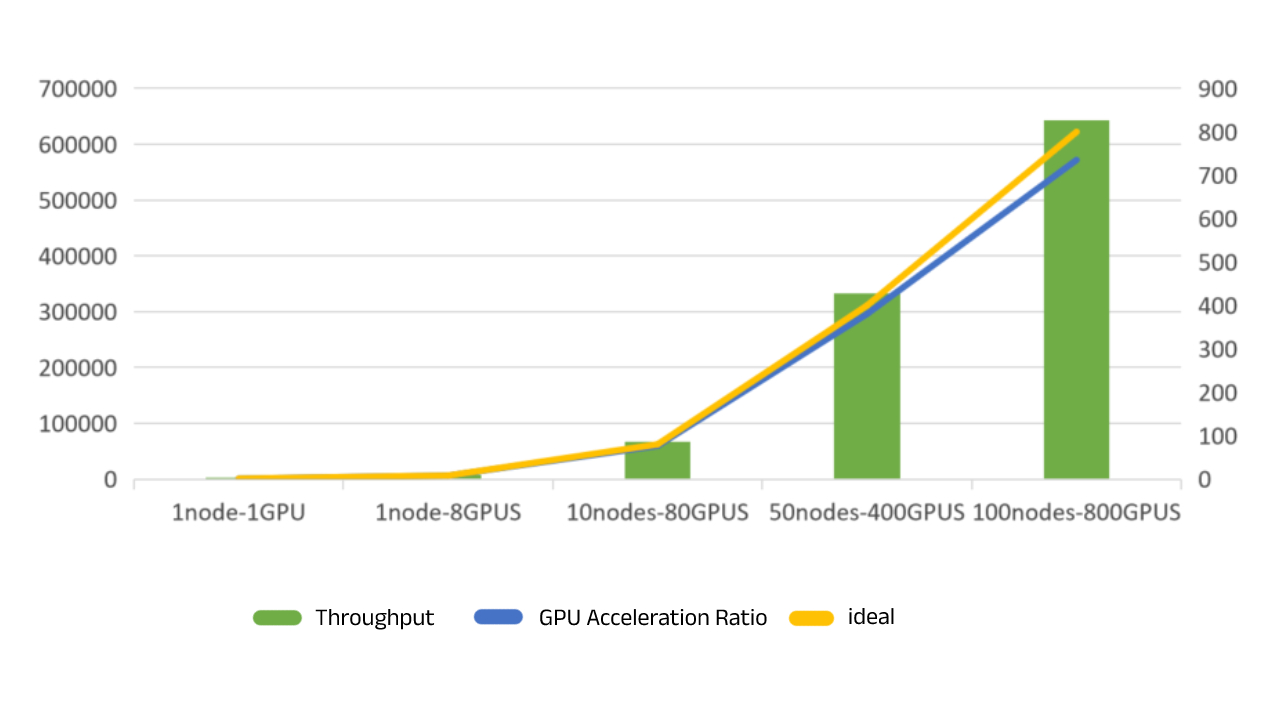

We provide global customers with advanced AI computing power, a complete software framework, and mature demos.